The AI Vulnerability Storm Is Here. Is Your Enterprise Ready?

On April 7, 2026, Anthropic announced Claude Mythos Preview alongside Project Glasswing. Together they are arguably both the most significant AI security milestone to date and the most coordinated vulnerability disclosure effort in industry history.

For enterprise security leaders, this isn't a headline to skim. It is a forcing function.

This post is the complete enterprise readiness guide: what happened, why it matters structurally, and — critically — what you do about it, starting this week.

1. The Situation: What Mythos Actually Means

"The window between vulnerability discovery and weaponization has collapsed into hours. Attackers gain disproportionate benefit, and current patch cycles, response processes, and risk metrics were not built for this environment." — CSA CISO Community / SANS / OWASP GenAI (April 2026)

The Numbers That Change the Calculus

| Metric | Value | Context |
|---|---|---|
| Mythos exploit success rate | 72% | Across all major OS + browsers |
| Working Firefox exploits generated | 181 | vs. 2 by Claude Opus 4.6 under identical conditions |
| Mean time-to-exploit (2026) | < 20 hours | Down from 2.3 years in 2018 |
| High-severity OSS vulns found (Feb 2026) | 500+ | Reported by Anthropic using Claude Opus 4.6 |
| Vendors in Glasswing early-access program | 40 | Critical infra, OS, and browser makers |
| Age of oldest Mythos discovery | 27 years | OpenBSD vulnerability originally introduced in 1998 |

The Three Technical Capabilities That Make Mythos Different

1. Exploits Without Scaffolding. No elaborate agent configuration required: Mythos generates exploits from a single prompt, at scale. The jump from 2 to 181 working Firefox exploits over the prior model (roughly a 90-fold increase) isn't incremental — it's a capability step-change that eliminates the human expertise requirement from attack workflows.

2. Chained Vulnerability Composition. Mythos identifies vulnerabilities composed of multiple primitives — scenarios requiring multiple memory corruption bugs combined into a single exploit path. This level of multi-step composition previously required senior offensive security researchers working for days.

3. "One-Shot" Single-Prompt Capability. Mythos accomplishes significantly more with a single prompt, without elaborate scaffolding or agent configuration. The skill floor for complex attacks has collapsed.

The Structural Asymmetry

The critical insight from the CSA/SANS briefing is this: Mythos is the acceleration, not the starting gun.

Open-weight models can already achieve much of this at accessible cost. Frontier models like Mythos compress timelines further — but those timelines were already inside most enterprise patch windows before Mythos existed.

Each patch also becomes an exploit blueprint. AI accelerates patch-diffing and reverse engineering of fixes, eliminating the grace period between disclosure and weaponization.
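Here is a minimal sketch of why a fix doubles as a blueprint. The Python below uses the standard library's `difflib` to pull out exactly the lines a patch adds or removes. The function name `changed_lines` and the C snippet are hypothetical. Real patch-diffing usually targets binaries or whole source trees, but the principle carries over: the diff points directly at the code that was vulnerable.

```python
import difflib


def changed_lines(before: str, after: str) -> list[str]:
    """Return the '+'/'-' lines of a unified diff, skipping the file headers."""
    diff = difflib.unified_diff(
        before.splitlines(), after.splitlines(), lineterm=""
    )
    changes = []
    in_hunk = False
    for line in diff:
        if line.startswith("@@"):
            in_hunk = True  # "---"/"+++" headers only appear before the first hunk
        elif in_hunk and line.startswith(("+", "-")):
            changes.append(line)
    return changes


# Hypothetical vulnerable function and its fix.
before = "void copy(char *dst, const char *src, size_t n) {\n    memcpy(dst, src, n);\n}\n"
after = (
    "void copy(char *dst, const char *src, size_t n) {\n"
    "    if (n > DST_MAX) return;\n"
    "    memcpy(dst, src, n);\n"
    "}\n"
)

print(changed_lines(before, after))
```

The single added bounds check tells an analyst, or a model, exactly which length parameter went unchecked before the fix.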

For enterprise leaders: The question is not "could this happen to us?" The question is "how long until it does, and are our response capabilities faster than the attack?"

2. 12 Months to the Storm: The AI Offensive Capability Timeline

Understanding Mythos requires understanding the trajectory. This wasn't a sudden leap — it was a predictable escalation that most enterprise security programs weren't tracking.

The Escalation Sequence

June 24, 2025 — XBOW Tops HackerOne. XBOW became the first autonomous system to outperform all human hackers on HackerOne's US leaderboard. Simultaneously, open-source raptor demonstrated that autonomous vulnerability research was available to anyone with an off-the-shelf agent. The democratization of offensive capability was public and documented.

August 5–8, 2025 — AI Finds Real Zero-Days at Scale. Google's Big Sleep autonomously discovered and reproduced 20 real-world zero-day vulnerabilities in FFmpeg and ImageMagick. Three days later at DEF CON 33, DARPA AIxCC found 54 vulnerabilities in four hours across 54 million lines of code.

September 2025 — The Singularity Warning. Google CISO Heather Adkins and Knostic CEO Gadi Evron publicly warned that attackers were racing toward a singularity moment, estimating autonomous exploitation capability was roughly six months away. The security community's own senior leaders were raising an institutional alarm.

November 14, 2025 — 🔴 First AI-Orchestrated State Espionage Campaign. Anthropic disclosed that a Chinese state-sponsored group used Claude Code to autonomously run full attack chains, from reconnaissance through exfiltration, across approximately 30 global targets. Anthropic had detected the activity in mid-September. This was the first confirmed AI-orchestrated espionage campaign in history.

February 5, 2026 — 🔴 Autonomous Attacks Confirmed at Scale. Anthropic (using Claude Opus 4.6) reported 500+ high-severity vulnerabilities in open source software. AISLE found 12 OpenSSL zero-days including a CVSS 9.8 flaw dating to 1998. Sysdig documented an AI-based attack reaching admin-level access in 8 minutes. Gambit reported AI-led compromise of Mexican government infrastructure.

March 2026 — Open Source Projects Overwhelmed. Linux kernel bug reports climbed from 2 to 10 per week; the AI-generated reports that were initially hallucinated are now verified as real. The curl project reversed its bug bounty suspension as high-quality AI-supported findings surged. The Zero Day Clock launched, visualizing the collapse of time-to-exploit to under one day.

April 7, 2026 — 🔴 Claude Mythos Preview + Project Glasswing. Anthropic announced Claude Mythos Preview: thousands of zero-days across every major OS and browser, a 72% exploit success rate, and a 27-year-old OpenBSD vulnerability. Project Glasswing, possibly the largest coordinated vulnerability disclosure in history, began with 40 vendors receiving early access for patching.

3. How AI-Driven Attacks Work Today: Operational Workflows

Understanding attack mechanics is essential for designing countermeasures. The following diagrams map the current attack lifecycle and the defensive workflows your enterprise must implement.

3.1 The AI Attack Lifecycle (Mythos-Class)

Based on documented incidents: Sysdig 2026, Anthropic Nov 2025 disclosure.

3.2 Enterprise Defensive Workflow (Mythos-Ready)

All four phases must operate continuously and at machine speed to close the asymmetry gap.

3.3 VulnOps: The New Security Function Enterprises Need

The briefing's most consequential long-term recommendation is standing up a Vulnerability Operations (VulnOps) function — a permanent, staffed-and-automated capability analogous to DevOps but for autonomous vulnerability research and remediation.

VulnOps owns continuous discovery of zero-day vulnerabilities across your entire software estate — from your own code to third-party software — and establishes automated remediation pipelines. Design around triage discipline from the start.

3.4 The Patch Window Collapse

The Zero Day Clock (zerodayclock.com), launched March 2026, visualizes what the data has been showing for years:

| Year | Mean Time to Exploit |
|---|---|
| 2018 | ~2.3 years |
| 2019 | ~1.9 years |
| 2020 | ~1.3 years |
| 2021 | ~10.8 months |
| 2022 | ~9.7 months |
| 2023 | ~4.9 months |
| 2024 | ~56 days |
| 2025 | ~23 days |
| 2026 | < 20 hours |

Source: 3,529 CVE-exploit pairs from CISA KEV, VulnCheck KEV, and XDB.
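If you want to track the same metric against your own exposure, the sketch below shows the arithmetic behind a time-to-exploit figure. For each CVE, subtract the disclosure timestamp from the first public exploit timestamp, then average. The sample records are invented for illustration, and this section does not describe how the Zero Day Clock handles exploits that predate disclosure. Reporting the median alongside the mean is a suggestion here, because a few slow outliers can dominate an average.

```python
from datetime import datetime
from statistics import mean, median

# Hypothetical sample of (CVE ID, disclosure time, first public exploit time).
# The real dataset (3,529 pairs from CISA KEV, VulnCheck KEV, and XDB) is not reproduced here.
pairs = [
    ("CVE-EXAMPLE-0001", "2026-03-01T08:00", "2026-03-01T20:00"),
    ("CVE-EXAMPLE-0002", "2026-03-02T00:00", "2026-03-03T00:00"),
    ("CVE-EXAMPLE-0003", "2026-03-04T10:00", "2026-03-04T16:00"),
]


def tte_hours(disclosed: str, exploited: str) -> float:
    """Hours from disclosure to first public exploit (negative if the exploit came first)."""
    delta = datetime.fromisoformat(exploited) - datetime.fromisoformat(disclosed)
    return delta.total_seconds() / 3600


hours = [tte_hours(d, e) for _, d, e in pairs]
print(f"mean TTE: {mean(hours):.1f} h, median TTE: {median(hours):.1f} h")
```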

The implication: Your 30-day patch SLA is now effectively a guarantee of operating with known, weaponized vulnerabilities in production. Patch cycles must be redesigned around 48-hour windows for critical CVEs, with pre-authorized deployment for Crown Jewels systems.
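One way to operationalize the 48-hour window is a daily check that lists open critical CVEs past their deadline and surfaces crown-jewel systems first. The sketch below assumes you can export open findings with a disclosure timestamp and a crown-jewel flag. The `OpenVuln` record, its field names, and the example CVE IDs are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Only the 48-hour critical window comes from the guidance above; other severities
# would need their own organization-specific targets and are not tracked here.
SLA = {"critical": timedelta(hours=48)}


@dataclass
class OpenVuln:
    cve: str
    severity: str
    disclosed: datetime
    crown_jewel: bool = False


def sla_breaches(vulns: list[OpenVuln], now: datetime) -> list[OpenVuln]:
    """Return unpatched vulns past their SLA, crown-jewel systems first."""
    breached = [
        v for v in vulns
        if v.severity in SLA and now - v.disclosed > SLA[v.severity]
    ]
    # False sorts before True, so crown-jewel entries come first (stable sort keeps the rest in order).
    return sorted(breached, key=lambda v: not v.crown_jewel)


now = datetime(2026, 4, 10, 12, 0)
open_vulns = [
    OpenVuln("CVE-EXAMPLE-1", "critical", datetime(2026, 4, 7, 12, 0)),
    OpenVuln("CVE-EXAMPLE-2", "critical", datetime(2026, 4, 9, 12, 0)),
    OpenVuln("CVE-EXAMPLE-3", "critical", datetime(2026, 4, 8, 0, 0), crown_jewel=True),
    OpenVuln("CVE-EXAMPLE-4", "high", datetime(2026, 3, 1, 0, 0)),
]
print([v.cve for v in sla_breaches(open_vulns, now)])
```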

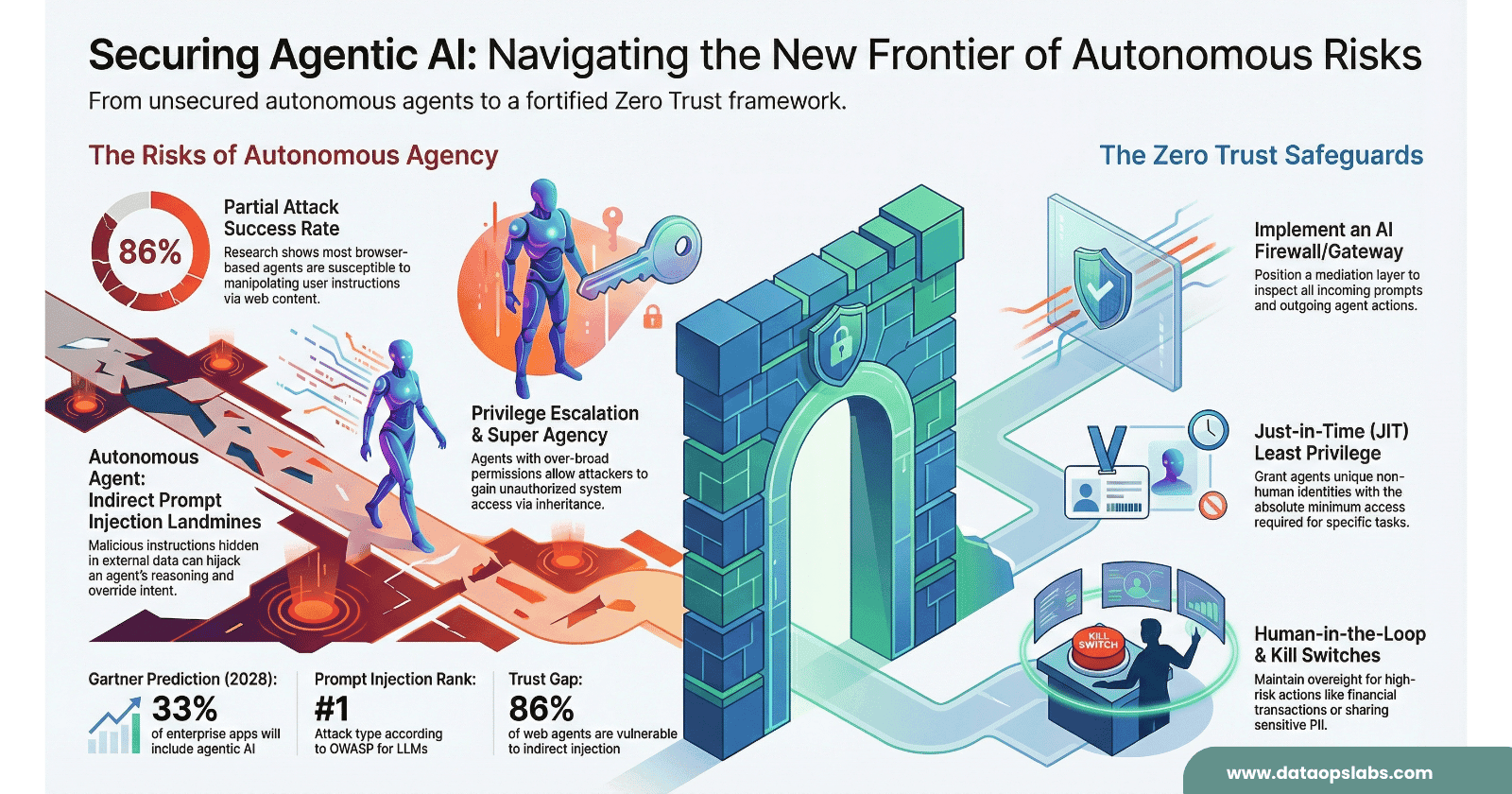

4. The 13-Risk Enterprise Register

The CSA/SANS briefing provides a structured risk register mapped to OWASP LLM 2025, OWASP Agentic 2026, MITRE ATLAS, and NIST CSF 2.0. Here is the full register with enterprise-specific impact analysis.

🔴 Critical Severity — Immediate Exposure If Unaddressed

Risk 1: Accelerated Threat Exploitation

AI-autonomous exploit generation at machine speed

Type: Threat

Framework: AML.T0040, AML.T0043, PR.PS, PR.IR

Enterprise Impact: Patch windows are eliminated. Every CVE becomes a live weapon within hours of disclosure. The skill floor has collapsed — commodity attackers now have capability that previously required nation-state resources. Each patch also becomes an exploit blueprint via AI-accelerated patch-diffing.

Risk 2: Insufficient AI Automation Capabilities

Defenders operating at human speed vs AI-augmented attackers

Type: Capability Gap

Framework: GV.OC, GV.RM, DE.CM, RS.MA

Enterprise Impact: SOCs running manual alert triage cannot match AI-assisted attackers. The asymmetry is not just technological — it is cultural. Teams that do not adopt AI agents cannot match the speed or scale of AI-augmented threats, regardless of human skill level.

Risk 3: Unmanaged AI Agent Attack Surface

Privileged AI agents outside existing control frameworks

Type: Vulnerability

Framework: LLM06, ASI02, ASI03, AML.T0047, GV.SC

Enterprise Impact: Coding agents are necessary to counter AI-speed threats — but they are privileged, insecure by default, and not covered by existing security controls. The agent harness (prompts, tool definitions, retrieval pipelines, escalation logic) is where the most consequential failures occur. Treat it with the same rigor as the agent's permissions.

Risk 4: Inadequate Incident Detection and Response Velocity

Detection and response at human speed against machine-speed attacks

Type: Capability Gap

Framework: ASI08, AML.T0047, DE.CM, DE.AE, RS.MA

Enterprise Impact: Alert triage volumes, SIEM correlation speed, and containment authorization latency were designed for human-paced threats. An AI-based attack reached admin-level access in 8 minutes (Sysdig, 2026). Your detection-to-containment timeline must be measured in minutes, not hours.

Risk 5: Cybersecurity Risk Model Outdated

Stakeholder decisions based on pre-AI risk models

Type: Governance

Framework: GV.OC, GV.RM, RS.CO

Enterprise Impact: Security reporting metrics built on pre-AI assumptions about exploit timelines may materially misstate exposure. Board and investor reporting may be inaccurate. Outdated models could lead to underfunding of controls and create regulatory liability. The CISO's ability to control risk has been reduced in ways that could affect business reporting and projections.

🟠 High Severity — Significant Exposure Within 45 Days

Risk 6: Incomplete Asset and Exposure Inventory

Unknown attack surface, shadow agents, undocumented code

Framework: ASI04, AML.T0000, ID.AM, GV.SC

Impact: Attackers can scan an entire OS codebase, at accessible cost, faster than your inventory team can catalogue your own estate. Shadow IT from citizen coders with AI agents fragments central visibility further. You cannot patch, segment, or defend what you don't know exists.

Risk 7: Unsecured Software Delivery Pipeline

AI-generated code shipping without LLM-driven security review

Framework: LLM01, LLM05, LLM08, ASI01, PR.PS

Impact: AI-generated code introduces vulnerabilities at higher volume than manual development. More code, same defect rate, more capable adversary. Without LLM-driven review integrated into the pipeline, exploitable flaws reach production before defenders can find them.

Risk 8: Network Architecture Insufficient for Lateral Movement Containment

Flat or insufficiently segmented networks enabling 1:N exploit leverage

Framework: PR.IR, PR.PS

Impact: AI-driven attacks exploit automated multi-hop lateral movement faster and more creatively than manual attackers ever could. When AI discovery increases the volume of exploitable findings, architectural segmentation becomes the primary control limiting blast radius.

Risk 9: Continuous Vulnerability Management Maturity Gap

Reactive posture against continuous AI-discovered zero-days

Framework: ASI10, ASI06, AML.T0018, ID.RA, DE.CM

Impact: Quarterly pen tests cannot keep pace with continuous AI discovery. Existing CVE/NVD infrastructure was built for dozens of critical CVEs per month, not hundreds. Zero-day vulnerabilities in your own code can be discovered and weaponized before your security team knows they exist.

Risk 10: Threat Detection Dependent on Lagging Intelligence

CVE/KEV structurally outpaced by AI discovery rates

Framework: AML.T0000, DE.CM, ID.RA, GV.OV

Impact: The CVE system may not scale to AI-generated discovery rates. Novel vulnerabilities have no KEV listing by definition — detection must shift to behavioral signals, not signatures. Threat intelligence is currently a lagging indicator.
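Novel vulnerabilities have no signature, so one of the simplest behavioral signals is how far an entity deviates from its own baseline. The sketch below flags a metric that sits more than three standard deviations above its history. The metric here is hypothetical hourly outbound megabytes for a service account. The threshold and the single-metric z-score are illustrative assumptions; production behavioral analytics combine many signals and account for seasonality.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], current: float, threshold: float = 3.0) -> bool:
    """Flag `current` if it exceeds the historical mean by more than `threshold` standard deviations."""
    if len(history) < 2:
        return False  # not enough data to form a baseline yet
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current > mu  # perfectly flat baseline: any increase is unusual
    return (current - mu) / sigma > threshold


# Hypothetical hourly outbound megabytes for one service account.
baseline = [10, 12, 11, 9, 10, 11, 12, 10]
print(is_anomalous(baseline, 13))  # within normal variation for this baseline
print(is_anomalous(baseline, 95))  # large spike worth investigating
```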

Risk 11: Innovation Governance and Oversight Deficit

Approval friction slowing defensive AI adoption

Framework: GV.OC, GV.RM, GV.RR, GV.OV

Impact: Without a cross-functional governance mechanism, the onboarding of any new defensive control runs into approval friction that slows adoption. AI-accelerated timelines mean this friction now has a hard deadline. Every day of governance delay is a day attackers operate with tools your defenders don't have.

Risk 12: Regulatory and Liability Exposure from AI-Discovered Vulnerabilities

Shifting standard of care as AI scanning becomes broadly available

Framework: GV.OC, GV.RM, GV.RR

Impact: The EU AI Act (August 2026) introduces automated audit, incident reporting, and cybersecurity requirements around AI. When AI can find significantly more vulnerabilities at accessible cost, the standard of what constitutes "reasonable defensive effort" shifts. Boards will face questions about whether they used available AI tools for defensive scanning — and whether not doing so constitutes negligence.

🟡 Medium Severity — Organizational Risk Requiring Structured Attention

Risk 13: AI Hype and Confusion Causing Systematic Inaction

Signal-to-noise collapse in threat and technology guidance

Framework: GV.OC, GV.RM

Impact: The volume of AI-related security guidance, commentary, and vendor claims exceeds anything the industry has experienced before. Teams that dismiss Mythos as hype miss critical landscape changes. The confusion itself is a consequential risk — it is the primary vector through which inaction becomes normalized.

5. 11 Priority Actions with Time Horizons

These are sequenced by urgency. Most critical-risk actions should start this week; PA-04 starts this month and PA-11 within six months. Action #2 (AI Agent Adoption) is the force multiplier that makes every other action executable at the required speed. Action #3 (Governance) is the structural prerequisite that prevents all others from being blocked.

🔴 PA-01: Point Agents at Your Code and Pipelines

Category: Risk Control | Risk: Critical | Start: This Week | Horizon: Ongoing

Turn LLM capabilities inward on your own code and dependencies. Start immediately by asking an agent for a security review of any code, then build toward a full audit within your CI/CD pipeline. All code — human or AI-generated — must pass LLM-driven security review before merge.

Tooling options:

Commercial: Claude Code Security (Anthropic), Codex Security (OpenAI)

Open source: OpenAnt (Knostic), raptor (Claude Code framework), exploitation-validator (Trail of Bits)
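A "gate that blocks" can be sketched as a small CI step that reads the review output and fails the build. This assumes your LLM review step, whichever tool you choose, can be made to emit findings as JSON. The schema, the severity names, and the blocking policy below are all hypothetical.

```python
import json

# Assumed merge policy: findings at these severities block the merge.
BLOCKING_SEVERITIES = {"critical", "high"}


def gate(findings_json: str) -> int:
    """Return a CI exit code: 1 if any blocking finding exists, else 0.

    Expects a JSON array of objects with "severity", "title", and "file" keys,
    a hypothetical format to adapt to whatever your review tool actually emits.
    """
    findings = json.loads(findings_json)
    blocking = [
        f for f in findings
        if str(f.get("severity", "")).lower() in BLOCKING_SEVERITIES
    ]
    for f in blocking:
        print(f"BLOCKED: [{f.get('severity')}] {f.get('title', '?')} in {f.get('file', '?')}")
    return 1 if blocking else 0


example = (
    '[{"severity": "High", "title": "SQL injection", "file": "app/db.py"},'
    ' {"severity": "low", "title": "Verbose error", "file": "app/api.py"}]'
)
print("exit code:", gate(example))
```

In a pipeline, a step would pipe the review output into `sys.exit(gate(sys.stdin.read()))`, so a non-zero exit code stops the merge. Keeping the policy in code makes the gate auditable rather than a matter of reviewer discretion.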

🔴 PA-02: Require AI Agent Adoption Across All Security Functions

Category: Operational Enabler | Risk: Critical | Start: This Week | Horizon: Ongoing

Formalize AI agent usage as part of all security functions, with mandatory security controls and oversight in place. Agents can immediately accelerate: incident response, GRC, red teaming, audit data collection, patch triage, and security operations overall.

Critical note: Optional adoption programs have not been shown to overcome cultural barriers. Adoption is a limiting factor in achieving all other actions in this list. Mandate it, with guardrails.

🔴 PA-03: Establish Innovation and Acceleration Governance

Category: Governance | Risk: Critical | Start: This Week | Horizon: 6 Months

Cross-functional mechanism (Security + Legal + Engineering) to evaluate new offensive threats and accelerate onboarding of defensive technologies. Without this in place, every other action in this list runs into approval friction that slows deployment to the attacker's advantage.

🔴 PA-04: Defend Your Agents

Category: Risk Control | Risk: Critical | Start: This Month | Horizon: 45 Days

Agents are not covered by existing security controls. The agent harness — prompts, tool definitions, retrieval pipelines, and escalation logic — is where the most consequential failures occur. Before deploying agents in or adjacent to production environments:

Define scope boundaries and blast-radius limits

Establish escalation logic and human override mechanisms

Audit the agent harness with the same rigor as the agent's permissions

Do not wait for industry governance frameworks — define your own now
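The first three controls above can be expressed as a deny-by-default policy that runs before every tool call. The sketch below shows one hypothetical shape for that check. The `AgentPolicy` fields, tool names, and paths are illustrative, not any agent framework's API. Unknown tools are denied, file targets must stay inside declared roots, and designated high-impact tools escalate to a human. The check normalizes `..` segments but does not resolve symlinks, which a real harness would also need to handle.

```python
import posixpath
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import Optional


@dataclass
class AgentPolicy:
    """Hypothetical deny-by-default scope for a single agent."""
    allowed_tools: set[str]
    scope_roots: tuple[str, ...]  # absolute paths the agent may touch
    needs_human_approval: set[str] = field(default_factory=set)


def decide(policy: AgentPolicy, tool: str, target_path: Optional[str] = None) -> str:
    """Return "allow", "deny", or "escalate" for one proposed tool call."""
    if tool not in policy.allowed_tools:
        return "deny"  # anything not explicitly granted is refused
    if target_path is not None:
        # Normalize first so "../" tricks cannot climb out of the scope.
        path = PurePosixPath(posixpath.normpath(target_path))
        if not any(path.is_relative_to(root) for root in policy.scope_roots):
            return "deny"
    if tool in policy.needs_human_approval:
        return "escalate"  # human override point for high-impact actions
    return "allow"


policy = AgentPolicy(
    allowed_tools={"read_file", "write_file", "run_tests", "deploy"},
    scope_roots=("/repo/app",),
    needs_human_approval={"deploy"},
)
for call in [("shell", None), ("write_file", "/repo/app/main.py"),
             ("write_file", "/repo/app/../../etc/passwd"), ("deploy", None)]:
    print(call, decide(policy, *call))
```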

🔴 PA-05: Prepare for Continuous Patching

Category: Risk Control | Risk: Critical | Start: This Week | Horizon: 45 Days

With 40 Glasswing vendors about to release critical patches, prepare triage and deployment capacity now. Run tabletop exercises for multiple simultaneous critical patches in the same week. This is a logistics and people capacity problem, not just a technical one.

🔴 PA-06: Update Risk Models and Business Reporting

Category: Governance | Risk: Critical | Start: This Week | Horizon: 45 Days

Review and update security risk metrics, reporting, and business risk calculations to reflect AI-accelerated exploit timelines and attack complexity. Pre-AI assumptions about patch windows, exploit scarcity, and incident frequency no longer hold. Communicate the challenge with stakeholders — map out and prioritize potential effects on business reporting and projections.

🟠 PA-07: Inventory and Reduce Attack Surface

Category: Risk Control | Risk: High | Start: This Month | Horizon: 90 Days

Use agents to accelerate continuous inventory updates. Start with critical internet-facing systems. Build toward full-coverage inventory over 45 days. Generate real SBOMs. Aggressively shut down unneeded or unmaintained functionality. Phase out suppliers that no longer comply with updated vulnerability management requirements. Isolate or air-gap at-risk systems.

You cannot patch, segment, or defend what you don't know exists.
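As a starting point for real SBOMs, the sketch below reads a CycloneDX JSON SBOM, using its `components` array with `name`, `version`, and `purl` fields. It lists entries with weak provenance and entries on an internal deny list of unmaintained packages. The deny list and package names are hypothetical. The check covers only top-level components; nested components and transitive dependency graphs need a fuller walk.

```python
import json


def audit_sbom(sbom_text: str, denylist: set[str]) -> list[str]:
    """List provenance gaps in the top-level components of a CycloneDX JSON SBOM."""
    bom = json.loads(sbom_text)
    issues = []
    for comp in bom.get("components", []):
        name = comp.get("name", "<unnamed>")
        if not comp.get("version"):
            issues.append(f"{name}: no version recorded")
        if not comp.get("purl"):
            issues.append(f"{name}: no package URL (purl)")
        if name in denylist:
            issues.append(f"{name}: on internal deny list")
    return issues


sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "example-parser", "version": "2.4.1",
         "purl": "pkg:pypi/example-parser@2.4.1"},
        {"type": "library", "name": "abandoned-lib"},
    ],
})
for issue in audit_sbom(sbom, denylist={"abandoned-lib"}):
    print(issue)
```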

🟠 PA-08: Harden Your Environment

Category: Risk Control | Risk: High | Start: This Month | Horizon: 6 Months

The basics remain valid and deliver the highest ROI:

Implement egress filtering — it blocked every public Log4j exploit

Enforce deep segmentation and Zero Trust where possible

Lock down your dependency chain — mandate artifact provenance

Mandate phishing-resistant MFA for all privileged accounts

Every boundary increases attacker cost — prioritize breadth over depth
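Egress filtering belongs in firewalls and proxies rather than in application code. Still, the decision it makes is simple enough to sketch: deny by default and allow only known destinations. The hostnames below are hypothetical. The second URL is the kind of outbound LDAP callback that public Log4j exploits relied on, which is why a default-deny egress policy cuts off that class of exploit even while the vulnerable library is unpatched.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of exact hostnames (no wildcards, so look-alike suffixes fail).
EGRESS_ALLOWLIST = {"updates.vendor.example", "api.payments.example"}


def egress_allowed(url: str, allowlist: set[str] = EGRESS_ALLOWLIST) -> bool:
    """Default-deny decision for an outbound request: allow only exact allowlisted hosts."""
    host = (urlsplit(url).hostname or "").lower()  # hostname is None for malformed URLs
    return host in allowlist


for url in [
    "https://updates.vendor.example/pkg.tar.gz",
    "ldap://attacker.example.net:1389/a",  # the kind of callback a JNDI-style exploit needs
    "https://updates.vendor.example.evil.example/",
]:
    print(url, "->", "allow" if egress_allowed(url) else "deny")
```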

🟠 PA-09: Build a Deception Capability

Category: Risk Control | Risk: High | Start: Next 90 Days | Horizon: 6 Months

Deception is attack-tool and vulnerability independent — it identifies attacks based on TTPs regardless of the specific exploit used. Deploy canaries and honey tokens. Layer behavioral monitoring. Pre-authorize containment actions. Build response playbooks that execute at machine speed.
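Honey tokens are the simplest deception primitive: credentials or records that no legitimate process ever uses. Any appearance in authentication or query logs is therefore a strong signal, regardless of which exploit got the attacker there. The sketch below assumes you can plant the strings (in config files, secrets stores, or database rows) and scan logs centrally. The `svc_` prefix and the log format are hypothetical.

```python
import secrets


def mint_honey_tokens(n: int, prefix: str = "svc_") -> set[str]:
    """Create n random credential-looking strings that no legitimate process uses."""
    return {f"{prefix}{secrets.token_hex(12)}" for _ in range(n)}


def tripped(log_lines: list[str], tokens: set[str]) -> list[str]:
    """Return every log line that mentions a honey token; each is a high-fidelity alert."""
    return [line for line in log_lines if any(t in line for t in tokens)]


tokens = mint_honey_tokens(3)
bait = next(iter(tokens))
logs = [
    "GET /health 200",
    f"auth attempt user=report-svc key={bait} src=10.0.4.17",
]
for line in tripped(logs, tokens):
    print("ALERT:", line)
```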

🟠 PA-10: Build Automated Response Capability

Category: Risk Control | Risk: High | Start: Next 90 Days | Horizon: 12 Months

Improve detection engineering and incident response to be systemic and, to the degree possible, autonomous. Human-speed response against AI attacks is not viable. Examples: asset and user behavioral analysis, pre-authorized containment actions, response playbooks that execute at machine speed.
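Pre-authorized containment means the decision is made once, in advance, so execution becomes a lookup. The sketch below shows that shape: a table that maps alert types to approved actions, with anything unmapped escalated to a person. The alert types and action functions are hypothetical placeholders that only return strings. Real ones would call your EDR, identity, or network tooling.

```python
from typing import Callable


# Hypothetical containment actions; in practice each would call EDR, IAM, or network APIs.
def isolate_host(target: str) -> str:
    return f"isolated host {target}"


def disable_account(target: str) -> str:
    return f"disabled account {target}"


def revoke_sessions(target: str) -> str:
    return f"revoked sessions for {target}"


# Pre-authorized mapping: approved in advance, so execution needs no human sign-off.
PLAYBOOKS: dict[str, list[Callable[[str], str]]] = {
    "honey_token_used": [disable_account, revoke_sessions],
    "anomalous_egress": [isolate_host],
}


def respond(alert_type: str, target: str) -> list[str]:
    """Run the pre-approved actions for an alert type, or escalate if none exist."""
    actions = PLAYBOOKS.get(alert_type)
    if actions is None:
        return [f"escalated {alert_type} on {target} to on-call analyst"]
    return [action(target) for action in actions]


print(respond("honey_token_used", "report-svc"))
print(respond("unrecognized_alert", "web-07"))
```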

🔴 PA-11: Stand Up VulnOps

Category: Risk Control | Risk: Critical | Start: Next 6 Months | Horizon: 12 Months

Long-term, there is no alternative to building a permanent Vulnerability Operations function — staffed and automated like DevOps, but for autonomous vulnerability research and remediation. VulnOps owns continuous discovery of zero-day vulnerabilities across your entire software estate (own code through third-party), and establishes automated remediation pipelines.

Design VulnOps around triage discipline from the start. Without triage discipline, the volume of AI-discovered findings will overwhelm the function within weeks.
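Triage discipline starts with two mechanical steps: collapse duplicate reports of the same bug, then rank what remains by impact. The sketch below uses one hypothetical scoring scheme. The severity scale, the tier weights, and the doubling for reachable code are assumptions, not the briefing's method. The point is that ranking must be explicit and automatic before finding volume climbs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    asset: str
    cwe: str
    location: str
    severity: int  # assumed scale: 1 (low) to 4 (critical)
    reachable: bool  # reachable from untrusted input, e.g. internet-facing


# Assumed asset tiers: tier 1 = crown jewels, weighted most heavily.
TIER_WEIGHT = {1: 3, 2: 2, 3: 1}


def triage(findings: list[Finding], asset_tier: dict[str, int]) -> list[Finding]:
    """Deduplicate findings, then rank by severity times asset weight times reachability."""
    unique: dict[tuple[str, str, str], Finding] = {}
    for f in findings:
        key = (f.asset, f.cwe, f.location)  # the same bug reported twice collapses to one
        if key not in unique or f.severity > unique[key].severity:
            unique[key] = f

    def score(f: Finding) -> int:
        tier = asset_tier.get(f.asset, 3)  # unknown assets default to the lowest tier
        return f.severity * TIER_WEIGHT.get(tier, 1) * (2 if f.reachable else 1)

    return sorted(unique.values(), key=score, reverse=True)


findings = [
    Finding("erp", "CWE-287", "auth.py:40", 4, True),
    Finding("erp", "CWE-287", "auth.py:40", 3, True),  # duplicate report of the same bug
    Finding("wiki", "CWE-79", "page.js:10", 3, True),
    Finding("erp", "CWE-400", "report.py:5", 1, False),
]
ranked = triage(findings, {"erp": 1, "wiki": 3})
for f in ranked:
    print(f.asset, f.cwe, f.location)
```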

6. Protecting Internal Applications: A Targeted Strategy

Internal applications — ERP, HRMS, finance platforms, custom line-of-business tools — are among the highest-value targets for Mythos-class attacks. They sit inside the perimeter, often run legacy code, and rarely receive the same security scrutiny as customer-facing systems.

Tiered Classification Framework

Three Breach Scenarios You Need to Prepare For

🔴 Scenario 1: AI-Discovered Auth Bypass in Legacy ERP

Mythos-class models scanning your ERP codebase autonomously identify a chained authentication bypass combining three low-severity bugs into a CVSS 9.5 exploit path. The vulnerability dates from a 2017 implementation. Time from Mythos scan to working exploit: under 4 hours.

Mitigation: Run LLM-based security reviews against your ERP codebase this week (PA-01). Enforce egress filtering to limit data exfiltration blast radius (PA-08). Pre-position a patch deployment pipeline for your ERP vendor's forthcoming Glasswing patches (PA-05).

🔴 Scenario 2: Citizen Coder Shadow Agent Compromise

A finance analyst uses an AI coding agent to build a custom reporting tool pulling from multiple internal data sources. The agent's MCP server configuration creates an uncontrolled data pathway. An attacker compromises the agent's tool definition via prompt injection, exfiltrating 6 months of executive communications.

Mitigation: Establish disciplined control of repos, artifacts, and agentic supply chain including MCP servers, plugins, and skills (PA-04). Require security review for all agent deployments. Implement outbound data monitoring capable of detecting unusual agent-driven access patterns.

🟠 Scenario 3: Supply Chain Compromise via AI-Generated Dependency

A developer uses Claude Code to build a microservice. The agent suggests a convenience library. That library was silently compromised three weeks earlier. The AI code review in your CI/CD wasn't configured to check provenance or behavior, only source-level vulnerability patterns. The malicious dependency ships to production.

Mitigation: Enforce artifact provenance checking in CI/CD for all AI-generated code (PA-01). Generate real SBOMs and audit all transitive dependencies (PA-07). Treat coding agent package suggestions as untrusted by default.
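The core of a provenance check is refusing any artifact whose digest does not match the one that was reviewed and pinned. The sketch below shows that logic with SHA-256 from Python's `hashlib`. The package names and lock format are hypothetical. Package managers offer built-in equivalents, for example pip's `--require-hashes` mode and the `integrity` field in npm lockfiles. There is one limit that matters for this scenario. Pinning catches a swap that happens after review, but a library that was already compromised when you pinned it will still match. Pair it with behavioral review and signed provenance.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, pinned: dict[str, str]) -> bool:
    """Accept an artifact only if its SHA-256 matches the digest pinned at review time."""
    expected = pinned.get(name)
    if expected is None:
        return False  # unpinned artifacts (e.g. fresh agent suggestions) are untrusted by default
    return sha256_hex(data) == expected


# Hypothetical lock entry recorded when the dependency was reviewed.
pinned = {"convenience-lib-1.2.0.tar.gz": sha256_hex(b"reviewed contents")}

print(verify_artifact("convenience-lib-1.2.0.tar.gz", b"reviewed contents", pinned))
print(verify_artifact("convenience-lib-1.2.0.tar.gz", b"tampered contents", pinned))
print(verify_artifact("agent-suggested-lib-0.1.tar.gz", b"anything", pinned))
```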

✅ What a Well-Hardened Internal Application Looks Like

A Tier 1 internal financial application was subjected to an LLM-based security review in January 2026. Three previously unknown vulnerabilities were found and remediated. Egress filtering is enforced — all outbound connections are whitelisted. Honey tokens are embedded in every financial table. Privileged access requires phishing-resistant MFA on a dedicated workstation.

Result: When the Glasswing patch wave arrived in April 2026, the security team had 72 hours of advance notice from an early-access program relationship, a pre-tested patch pipeline, and a pre-authorized deployment window approved by the CAB. Patch deployed in 4 hours with zero downtime.

7. 10 Diagnostic Questions for Your Security Program

Use this as a board-room triage exercise. Honest answers reveal ground truth about your security program's actual capability — not its documented capability.

| # | Question | Why It Matters |
|---|---|---|
| Q1 | What is our actual stance on AI today? | Allowed, tolerated, restricted, or unknown? Unknown is the most dangerous answer. If your CISO doesn't know what AI tools employees are using, shadow IT risk is already materializing. |
| Q2 | Can employees use agentic coding tools in the enterprise? | Agentic capabilities (looping LLM tool use) — not just chatbot access. Do you have security guardrails for coding agents? Agents with access to internal code, APIs, and infrastructure are a new attack surface your policies almost certainly don't address. |
| Q3 | Can employees contribute to open source without legal ambiguity? | A legal and IP question, not a philosophy question. AI coding agents routinely suggest open source contributions. If your legal framework doesn't cover this, IP leakage and liability exposure are running unmanaged right now. |
| Q4 | Do we have disciplined control of repos, artifacts, and agentic supply chain? | Source control, package paths, artifact provenance, and what is allowed into your CI/CD through coding agents. MCP servers, plugins, and skills are the new attack surface of your software supply chain. |
| Q5 | Is there a real security gate between code change and production? | "We have a policy" is not the same as "we have a gate that blocks." If AI-generated code can ship without LLM-driven review, you have Risk #7 (Unsecured Pipeline — High severity) active in production today. |
| Q6 | Is security operational or primarily advisory? | Advisory security programs cannot move at the speed Mythos demands. If your security function can't directly affect outcomes — only review and escalate — your response velocity is structurally insufficient. |
| Q7 | What is the fastest your company has made a security-driven production change in the last year? | Use a real example. Your answer reveals actual response velocity. If your fastest emergency change took 2 weeks, your risk profile is structurally mismatched with a sub-20-hour exploitation window. |
| Q8 | Are our critical crown jewels explicitly tracked and current? | Not theoretically important systems — the actual few that matter most, with main dependencies. If this list isn't on paper and validated in the last 90 days, you cannot prioritize protection or response effectively. |
| Q9 | Do we know how to get urgent work prioritized by our key third parties? | Pre-established relationships, not ad hoc escalation. When a Glasswing patch comes from a vendor you depend on, can you guarantee deployment within 48 hours? |
| Q10 | Does executive leadership have a working definition of urgency? | If everything is a crisis, nothing is urgent. The ability to escalate a patch deployment to the executive level and receive immediate resource authorization is a concrete capability you either have or you don't. Test it before you need it. |

8. How to Brief Your Board and Executive Team

Mythos has broken into mainstream boardroom conversation. That creates an opportunity — security leaders can now make a compelling business case that was previously difficult to land. Use these narrative frameworks.

Talking Point 1: AI Accelerates Both Sides

"AI is making us faster and more competitive — the business is already pursuing that value. But those same capabilities in adversary hands compress the time to a serious incident from weeks to hours, and that gap will continue to narrow. Turned inward, these tools let us find and fix our own weaknesses before adversaries do. Without attention to buying down risk, we move faster as a business while accumulating risk at the same rate."

Talking Point 2: Our Existing Program Has More Value, Not Less

"The security program this company has funded is what makes our AI strategy viable. In an environment where entry points and weaknesses are discovered faster, our containment architecture is more valuable, not less. The investments already in place ensure no single point of entry becomes a full business disruption."

Talking Point 3: This Is a 90-Day Execution Problem, Not an Open-Ended Initiative

Frame your request around a targeted, aggressive 90-day plan with clear owners and outcomes:

Increase People and Capacity — repurpose existing staff and/or add headcount to handle the anticipated increase in triage, remediation, and incidents, while protecting experienced staff from burnout as the Glasswing patch wave arrives

Deploy AI Tooling — formalize AI agent usage across all security functions as standard practice: scanning own code, ensuring AI-driven review before code ships, augmenting teams with purpose-built agents

Harden Infrastructure — update asset inventories, reduce unnecessary exposure, enforce segmentation, Zero Trust, egress filtering, and phishing-resistant authentication

Accelerate Governance — align Security, Legal, and Engineering to fast-track priority defensive technology onboarding; current approval cycles are too slow

Update Playbooks — pre-authorized containment for simultaneous incidents, executing at machine speed

Track Progress — weekly check-ins with clear owners and measurable outcomes

The Legal and Regulatory Frame (EU AI Act, August 2026)

"When AI can find significantly more vulnerabilities at accessible cost, the standard of what constitutes reasonable defensive effort shifts. Boards will face questions about whether they used available AI tools for defensive scanning — and whether not doing so constitutes negligence. This is a governance risk with direct financial exposure, and the EU AI Act makes it a compliance requirement from August 2026."

9. The 90-Day Execution Plan

10. The Human Cost: Burnout as an Operational Risk

The briefing is unusually direct about a factor most security plans omit entirely: the human cost of this transition is itself an operational risk.

Security teams are caught in a vice. AI is simultaneously:

Accelerating the volume of vulnerabilities they must respond to

Increasing the volume of code their organizations are shipping

Expanding the attack surface they must defend

Add the cognitive intensity of integrating AI into their own workflows, and you have a workforce already at capacity absorbing exponential increases in workload without corresponding investment in headcount, tooling, or wellbeing.

Burnout and attrition in security functions represent a direct operational risk — the expertise needed to navigate this transition is scarce, takes years to develop, and is not replaceable on short timescales.

What Leadership Must Do

Request additional headcount before the Glasswing patch wave — not after burnout materializes

Mandate AI agent use as empowerment — frame coding agents as a way for every team member to operate at a higher level. All roles are becoming AI builder roles, and the barrier is now lower than Excel

Establish sustainable workload frameworks — mental health support and retention should be treated as strategic priorities with the same urgency as technical challenges

Define a working urgency threshold with leadership — if everything is a crisis, nothing is. Teams burn out when escalation has no meaningful triage

Set quarterly strategic horizons — annual roadmaps in a world where the threat landscape shifts monthly are planning theater. Long-term goals should be considered no more than a quarter away

Collective Defense: The Multiplier You Can't Build Alone

Attackers already operate as syndicates — crowdsourcing, sharing tools, and moving as a collective. The briefing's closing argument is direct:

"Teams beat stovepipes, coalitions beat teams, and coalitions equipped with the right technology win."

Engage now with sector coordinating groups, ISACs, CERTs, and standards bodies to share threat intelligence, coordinate response, and produce sector-specific guidance.

For enterprises in Indian BFSI, critical infrastructure, and government sectors, this means active engagement with CERT-In, SEBI's cybersecurity framework, and RBI's IT security guidelines, all of which are likely to be updated in response to AI-discovered vulnerability risk over the next 18 months.

The Bottom Line

"We have done this before. Y2K was a systemic threat with a hard deadline, and the industry met it through coordinated, disciplined effort. This is the same kind of problem, requiring the same kind of response, with more powerful tools available to defenders." — CSA CISO Community / SANS Institute (April 2026)

The enterprises that will navigate the next 24 months of AI-accelerated vulnerability storms are not necessarily those with the largest security budgets. They are those that act with the most velocity, the most discipline, and the clearest understanding that the asymmetry is structural — and that defenders using AI will outperform defenders who aren't, regardless of how skilled the human teams are.

The window for building this capability ahead of the next Mythos-class announcement is measured in weeks.

Every priority action in this guide can begin this week. Not next quarter. This week.

References and Source Material

Primary: "The AI Vulnerability Storm: Building a Mythos-ready Security Program" — CSA CISO Community, SANS Institute, [un]prompted, OWASP GenAI Security Project. April 12, 2026. CC BY-NC 4.0. Contact: cisos@cloudsecurityalliance.org

Secondary: Claude Mythos Preview System Card — Anthropic, April 7, 2026

Data: Zero Day Clock — zerodayclock.com (CISA KEV, VulnCheck KEV, XDB — 3,529 CVE-exploit pairs, 2018–2026)

Frameworks Referenced: OWASP LLM Top 10 2025 · OWASP Agentic Top 10 2026 · MITRE ATLAS · NIST CSF 2.0

About This Post

This analysis is part of the AI-Native Security for the Enterprise series on DataOps Labs, exploring the intersection of cloud architecture, AI/ML systems, and enterprise-grade security engineering. All framework references and source attribution are maintained throughout.

Tags: #cybersecurity #aisecurity #ciso #enterprisesecurity #devsecops #cloudnative #awssecurity #zerodayvulnerabilities #mlsecurity #mythos #glasswing